Contemporary video games increasingly resemble cinematic blockbusters. Advanced graphics not only make intricately detailed worlds possible but also deliver lifelike facial expressions and animations.

Keanu Reeves, Idris Elba, Norman Reedus, and numerous other actors now fully embody their roles rather than merely taking part in a project or lending their voices to characters. Motion capture technology has reached new levels of sophistication. What exactly is this technology, and how did it come about? This article addresses both questions.

Stages of Development

The precursor of motion capture appeared in the early 20th century. Animators were looking for ways to streamline production, which led Max Fleischer to invent rotoscoping. The concept was simple in principle but laborious in practice. Scenes were first filmed with live actors and standard backdrops, and artists then traced over the footage using special lenses to create new frames. Groundbreaking as it was, the process was far from easy, reportedly requiring up to three years to produce just one minute of the first animation.

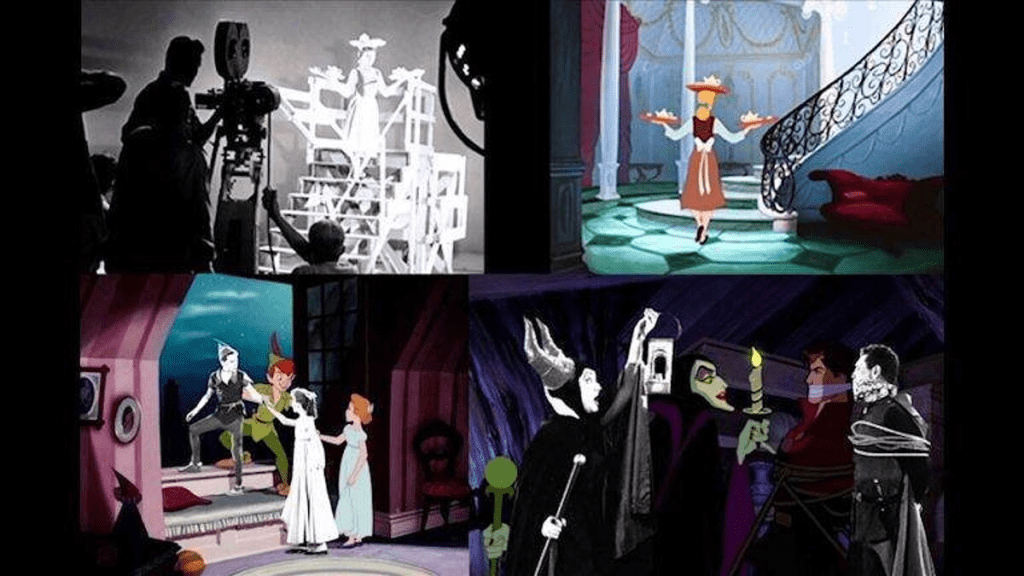

Years later, advances in technology sped up production. Rotoscoping became popular in both the United States and the Soviet Union. The technique was used in Disney’s “Cinderella” and “Alice in Wonderland,” as well as the Soviet “Snow Queen” and various other works.

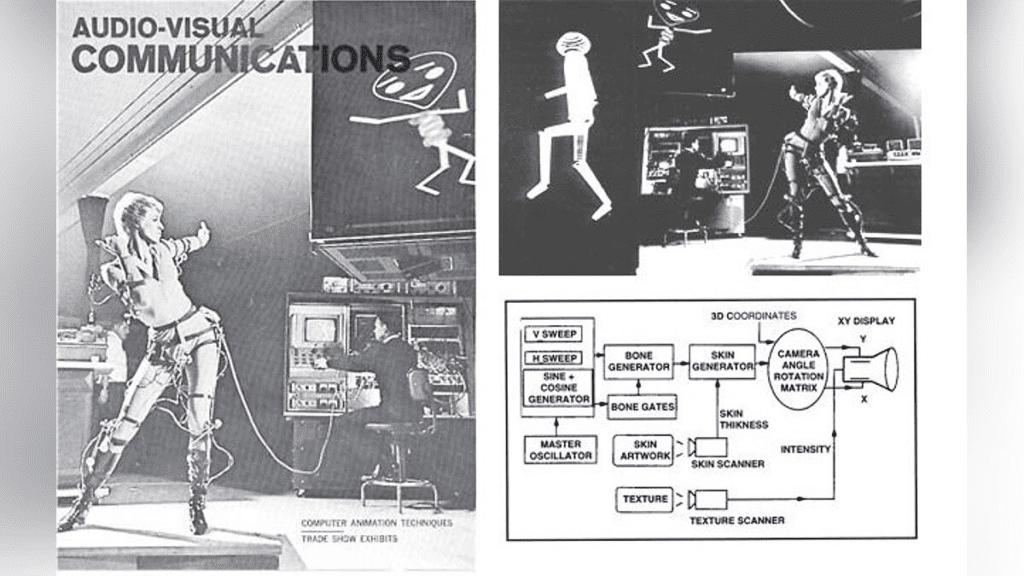

Over time, computers found their way into every aspect of human life. The first device capable of full motion capture was invented in 1962. Although the quality fell short of what contemporary audiences and gamers expect, it was a significant milestone. The technology even found its way into television commercials, a sign of how widely it was being adopted.

The LED suit marked a significant advance in motion capture, serving as a key instrument for translating human motion into the digital worlds of games and film. The technology has continued to evolve ever since. Motion capture in particular has absorbed several supplementary technologies that refine and extend the data recorded by cameras tracking markers placed on an actor’s key joints. How does this process work in detail?

Technical Base

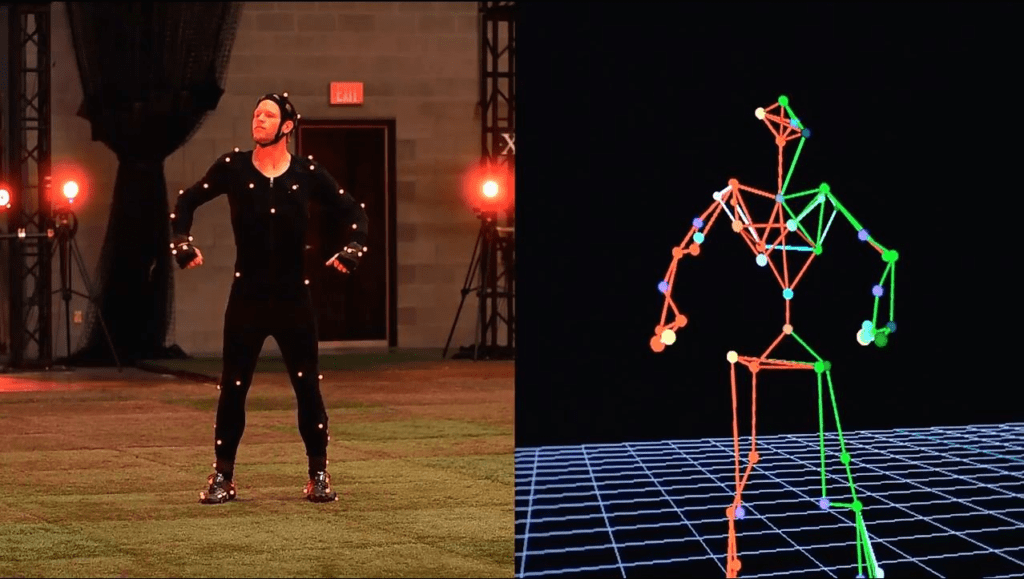

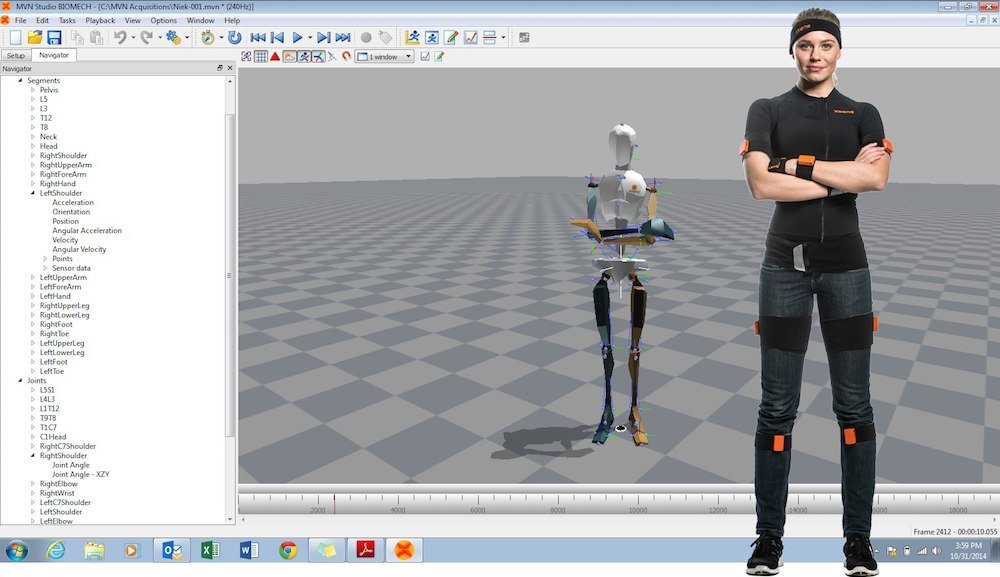

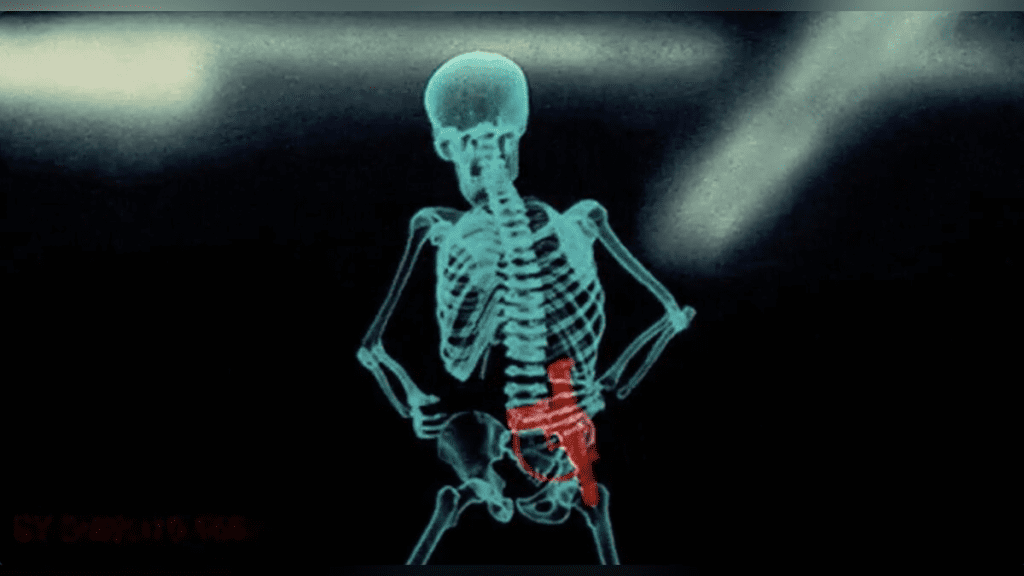

Traditionally, motion capture relies on three fundamental components: a sensor-equipped suit, cameras that capture movement, and specialized software. The better (and more expensive) the equipment, the more accurately the character’s skeleton is reconstructed and the more precisely each micro-movement is captured.

Systems also differ in the types of sensors they use. Not all are LEDs; some are magnets, miniature gyroscopes, or even complete exoskeletons, yet optical tracking remains the prevalent choice. A cost-effective method uses passive markers affixed to the body that reflect light back to numerous cameras. This approach has clear limitations, however: complex sets or multiple actors can confuse the equipment, resulting in mixed-up or lost markers. Nonetheless, there is a solution.

Active LEDs differ in that they emit their own light. They therefore need a power source, so numerous batteries are built into the suit. In addition, each marker is assigned its own number, which helps the cameras tell the data apart and yields a cleaner result. With this system, even partial visibility of the body is not an issue, because the cameras track the diode numbers and recover quickly. Although more costly, this technique is increasingly favored in video games, where actors perform dynamic action sequences, move rapidly, and interact with one another.
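The difference between anonymous passive markers and numbered active ones can be illustrated with a small sketch. It is a deliberately simplified toy, not how any particular capture system works: the function names `label_by_proximity` and `label_by_id`, the marker names, and the coordinates are all hypothetical, and how a real system decodes a diode's number is outside its scope. The first function guesses which marker is which by picking the closest detection to the last known position; the second simply trusts the ID that arrives with each detection.

```python
import math


def label_by_proximity(previous, detections):
    """Label anonymous detections by greedy nearest-neighbour matching.

    previous:   dict of label -> (x, y, z) from the last frame
    detections: list of (x, y, z) points with no identity attached
    """
    remaining = list(detections)
    labelled = {}
    for label, last_pos in previous.items():
        if not remaining:
            break  # fewer detections than markers: this one is lost
        nearest = min(remaining, key=lambda p: math.dist(p, last_pos))
        labelled[label] = nearest
        remaining.remove(nearest)
    return labelled


def label_by_id(previous, detections):
    """Update positions from detections that carry their own marker ID.

    Markers missing from this frame keep their last known position.
    """
    updated = dict(previous)
    for marker_id, pos in detections:
        updated[marker_id] = pos
    return updated


previous = {"left_wrist": (0.0, 0.0, 0.0), "right_wrist": (1.0, 0.0, 0.0)}

# The actor crosses both hands quickly between two frames:
# the left wrist really moves to x=0.6, the right wrist to x=0.4.
passive_frame = [(0.6, 0.0, 0.0), (0.4, 0.0, 0.0)]
active_frame = [
    ("left_wrist", (0.6, 0.0, 0.0)),
    ("right_wrist", (0.4, 0.0, 0.0)),
]

passive_result = label_by_proximity(previous, passive_frame)
active_result = label_by_id(previous, active_frame)

# In the next frame the right wrist is hidden behind the body.
occluded_result = label_by_id(active_result, [("left_wrist", (0.7, 0.0, 0.0))])

print("passive:", passive_result)
print("active: ", active_result)
print("occluded:", occluded_result)
```

In this toy scenario the proximity-based labelling swaps the two wrists after the crossing, while the ID-based version keeps them apart and retains the last known position of the hidden marker.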

While the fundamentals of motion capture have stayed the same over the years, the technology keeps advancing. Initially, a significant challenge was that sensors sit on the surface of the body rather than on the bones, which made it difficult to reconstruct an accurate skeleton. This issue has been addressed over time.
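To make the body-versus-bones gap concrete, here is a minimal sketch of one simple idea: place two markers on opposite sides of a joint, take their midpoint as an estimate of the joint centre inside the limb, and measure the bend between the two adjoining bones. This is an illustrative simplification under stated assumptions, not the method used by any specific software; the helper names `joint_center` and `joint_angle_degrees` and all coordinates are hypothetical.

```python
import math


def joint_center(marker_a, marker_b):
    """Estimate a joint centre as the midpoint of two skin markers
    placed on opposite sides of the joint."""
    return tuple((a + b) / 2 for a, b in zip(marker_a, marker_b))


def joint_angle_degrees(parent, joint, child):
    """Angle at `joint` between the bones joint->parent and joint->child."""
    v1 = tuple(p - j for p, j in zip(parent, joint))
    v2 = tuple(c - j for c, j in zip(child, joint))
    dot = sum(a * b for a, b in zip(v1, v2))
    norm = math.hypot(*v1) * math.hypot(*v2)
    # Clamp to guard against tiny floating-point overshoot outside [-1, 1].
    cosine = max(-1.0, min(1.0, dot / norm))
    return math.degrees(math.acos(cosine))


# Hypothetical marker positions in metres.
shoulder = (0.0, 0.0, 0.0)
elbow_inner = (1.0, 0.0, 0.05)   # marker on the inside of the elbow
elbow_outer = (1.0, 0.0, -0.05)  # marker on the outside of the elbow
wrist = (1.0, 1.0, 0.0)

elbow = joint_center(elbow_inner, elbow_outer)
elbow_bend = joint_angle_degrees(shoulder, elbow, wrist)

print("estimated elbow centre:", elbow)
print("elbow angle in degrees:", elbow_bend)
```

Real solvers fit an entire skeleton to many markers at once, but the principle is similar: surface measurements are converted into joint positions and rotations that a digital character can use.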

New technologies often emerge from sectors not traditionally associated with gaming and film. For instance, Xsens suits, initially developed for analyzing human biomechanics during sports rehabilitation, have gained popularity. Nowadays, markers can work in open, unbounded spaces and underwater, and software can identify which sensors correspond to which bones and complete the picture automatically inside the game engine. This lets actors evaluate the result right away and visualize the scene more effectively. Yet all these advances would be far less remarkable without one key element: facial animation.

The technology for recording facial expressions, known as MotionScan, was introduced not too long ago. It uses smaller versions of the passive markers traditionally used in motion capture, attached directly to the actor’s face. The more markers there are, the more precise the captured image. Today, these markers are tiny dots that do not hinder the actors’ ability to express a full range of emotions. However, the initial version of MotionScan required the actor to sit in a room surrounded by numerous cameras, often 32, to capture the primary emotions or a monologue. These recordings were then mapped onto a digital skeleton. Early iterations of this technology sometimes produced characters that looked unnatural on screen, which is understandable considering the actor was merely sitting in a chair during the recording.

In contemporary computer games and films, it is common to combine both Motion Capture and MotionScan technologies. This fusion enables the recording of emotions and movements in real time, resulting in an exceptionally lifelike depiction. Generally, this method is referred to as Performance Capture. The entertainment industry has indeed evolved significantly. Let’s examine its key milestones.

Motion Capture in Cinema

The first movie to use motion capture was “Total Recall,” where Arnold Schwarzenegger’s skeleton was scanned by sensors and shown on screen. The quality was lacking, however, and the limbs had to be drawn by hand. Later, George Lucas reached a milestone with “Star Wars: Episode I – The Phantom Menace,” presenting Jar Jar Binks as a fully digital character with as much screen presence as the protagonists. His movements and facial expressions were created digitally using motion capture.

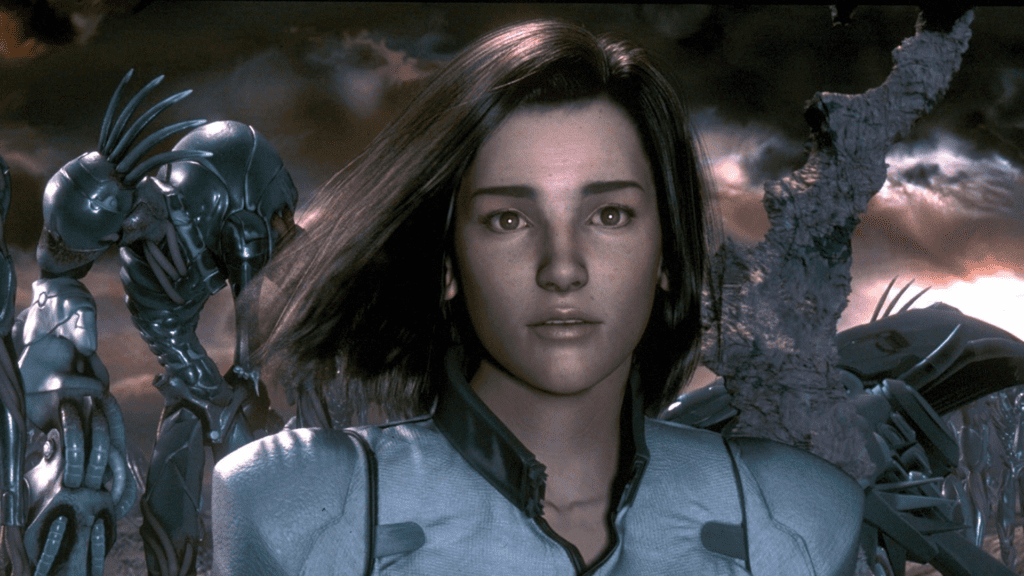

The trend also found its way into animation, where the results were often unconventional. Prominent examples are “Final Fantasy: The Spirits Within” and “The Polar Express,” in which Tom Hanks played up to five roles. There were other productions, but they made considerably less impact. Gradually, the animation industry moved away from captured performers toward computer animation crafted entirely by artists, yet these experiments remained instructive.

Nevertheless, the crowning achievement of Performance Capture, and the one that led to its widespread use, was undoubtedly Andy Serkis’s portrayal of Gollum. The character appeared in the second and third installments of The Lord of the Rings trilogy and remains impressively realistic to this day. Serkis’s physique was transformed into an entirely different creature, and he performed every movement, vocal line, and facial expression himself.

A dedicated team of experts contributed to the creation of Gollum, showcasing what the technology could do. This marked the beginning of a new era for visual effects. Nowadays, virtually no Marvel movie is made without motion capture, and the “Planet of the Apes” series, featuring Serkis, reportedly consists of about 90% such scenes. Regrettably, actors working in Performance Capture are still ineligible for an Oscar, as the Academy contends that the essence of an actor’s performance cannot be fully captured in computer-generated models. Serkis has been a prominent critic of this restriction, yet no significant change has occurred.

The film industry’s most recent major milestone was James Cameron’s “Avatar.” In this film, Performance Capture reached its zenith, and the movie still looks remarkable more than a decade later.

Motion Capture in Gaming

In the realm of gaming, motion capture has been a central element of production for quite some time. Interestingly, the earliest applications of this technology were in fighting games and Prince of Persia. In 1989, Jordan Mechner employed rotoscoping to record his brother’s movements, which enhanced the fluidity of the prince’s animations. Fully realized motion capture was later showcased in titles like Soul Edge and Virtua Fighter 2. Subsequently, the Mortal Kombat series also adopted this technology.

However, the true innovation came from Quantic Dream. This studio pioneered the genre of interactive cinema, in which players control lifelike human characters. Titles like Fahrenheit, Heavy Rain, Beyond: Two Souls, and Detroit: Become Human demonstrated how the technology can create experiences that are beautiful, natural, and spectacular.

Meanwhile, Rockstar Games released L.A. Noire. The game’s standout feature was its focus on emotions. Players had to watch the characters’ facial expressions to work out who was lying, something made possible by MotionScan. The technology’s first application in this project astonished players.

Interestingly, the acclaimed The Last of Us series did not utilize Performance Capture technology; it relied solely on motion capture, resulting in Ellie and Joel looking quite different from their voice actors. Conversely, Hideo Kojima’s Death Stranding embraced modern techniques. The extensive cutscenes, featuring famous actors performing as if in a movie or TV series, remain remarkable.

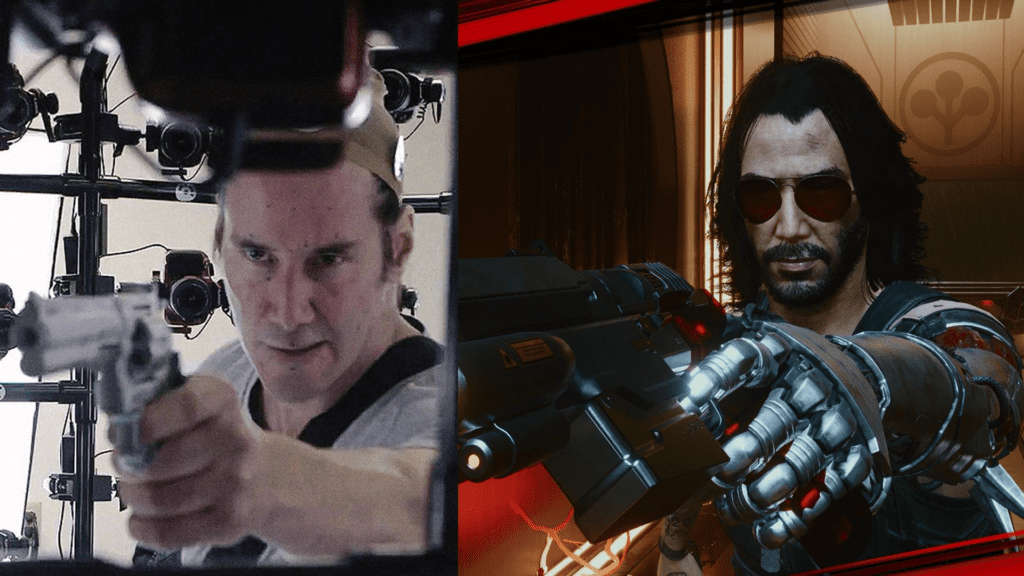

It’s important to recognize that Performance Capture remains a highly expensive and time-intensive technology. There are rumors that Cyberpunk 2077’s initial failure was due to Keanu Reeves’ late addition to the project. His character had to be integrated into nearly every cutscene, leading to a rushed final product.

Quantic Dream is facing financial challenges, as producing a large-scale interactive film today requires a significant investment that the studio lacks. Nonetheless, there are success stories. The Star Wars Jedi series, featuring Cameron Monaghan as the young Jedi Cal Kestis, showcased impressive graphics, with a protagonist who not only resembles the actor but looks convincingly lifelike. The Dark Pictures Anthology series also deserves mention. While its games are simpler and shorter than Quantic Dream’s productions, each entry improves the animation and the actors’ facial expressions.

It’s difficult to envision contemporary films and games without the use of Motion Capture technology. This technology has significantly blurred the lines between these two domains, and it’s certain that we’ll witness an increasing number of renowned actors in gaming ventures. Motion capture has evolved substantially and justly holds a prominent place among current visualization techniques. We eagerly anticipate the next wave of advancements, which will surely be a topic of discussion in upcoming articles.

![Behind the Scenes - Death Stranding [Making of]](https://www.aeondogma.com/wp-content/cache/flying-press/d9f7e09687d75343b9f9ec40240e6a90.jpg)

![Behind the Scenes - Prince of Persia (1989) [Making of]](https://www.aeondogma.com/wp-content/cache/flying-press/4b8d05c5efe060103c155371d1e845dd.jpg)