The last decade of PC gaming has been defined by a slow transition from raw power to synthetic trickery. A revolution in visual fidelity was promised, a leap into a world where light behaves naturally and frame rates defy the limits of hardware. Instead, an era of highly specialized features has arrived, serving the marketing department more than the actual person holding the controller.

Gamers pay a premium for technology that often requires a microscope to appreciate. The cost of entry has spiraled out of control while the tangible benefits remain locked behind layers of proprietary software and cynical hardware cycles. Progress is no longer measured by engineering efficiency. Instead, corporate growth is prioritized over the genuine needs of the gaming community.

Consumer Crossfire Acronym Warfare

The average consumer is currently caught in a crossfire of acronyms. DLSS, FSR, Ray Tracing, Path Tracing, and Frame Generation act as weapons in a brand war that leaves the buyer confused and lighter in the pocket. A profound gap exists between what is demonstrated in a controlled tech demo and what the gamer actually experiences at a desk.

Most of these improvements are so subtle that they vanish during actual gameplay, yet their presence on a spec sheet justifies price hikes that would have been unthinkable a generation ago. The honesty of rasterization has been traded for the smoke and mirrors of tensor cores. We are not just paying for pixels. We are paying for a narrative.

Settings Labyrinth Diminishing Returns

Modern PC games have become a labyrinth of menus. Few specialists can explain the functional difference between various versions of image scaling without a technical manual. We have reached a point of diminishing returns where the effort required to optimize a game often outweighs the joy of playing the title. Most players select a preset and hope for the best, ignoring the intricacies of driver updates because they simply want the software to function.

There is irony in the current handling of settings. A simple example exists in a benchmark like Total War: Warhammer III. By moving sliders from maximum to minimum in a standard 1080p resolution, a player can jump from a struggling 55 frames per second to a massive 192 frames per second.

This roughly 3.5x increase is achieved without a single AI tool or tensor core. The flexibility of traditional settings is enough to meet the needs of any player. Synthetic frame generation is unnecessary when the basic engine architecture is capable of massive scaling. The push for AI solutions feels like a distraction from the fundamental work of optimization.
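The size of that jump is easier to judge in frame times. A minimal sketch using only the two benchmark figures quoted above; the helper name `frame_time_ms` is illustrative and not taken from any benchmarking tool:

```python
def frame_time_ms(fps: float) -> float:
    """Convert frames per second to milliseconds per frame."""
    return 1000.0 / fps


max_preset_fps = 55.0   # figure quoted in the text (maximum settings, 1080p)
min_preset_fps = 192.0  # figure quoted in the text (minimum settings, 1080p)

speedup = min_preset_fps / max_preset_fps
saved_ms = frame_time_ms(max_preset_fps) - frame_time_ms(min_preset_fps)

print(f"speedup: {speedup:.2f}x")
print(f"frame time: {frame_time_ms(max_preset_fps):.1f} ms -> "
      f"{frame_time_ms(min_preset_fps):.1f} ms")
print(f"saved per frame: {saved_ms:.1f} ms")
```

Roughly 13 ms per frame is recovered purely by moving sliders, with no reconstruction or generated frames involved.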

Engineering Mastery Brute Force Failure

The industry has a short memory when a narrative suits the current hardware cycle. In 2017, the PlayStation 4 Pro demonstrated that high quality 4K gaming was possible on relatively low powered hardware through techniques like checkerboard rendering. Horizon Zero Dawn was a visual triumph proving the efficiency of smart image reconstruction.

Fast forward to the 2024 remaster. The game moved to native resolution with updated lighting and textures. Under a microscope, the changes are extensive. In the eyes of the public, the reaction was mostly indifference. The original already looked great. The perceived benefit was minimal because the human eye has limits that technical benchmarks ignore. We are being sold solutions to visual problems that most people do not notice until a marketing slide mandates closer inspection.

Upscaling Logic Development Failure

The core question of upscaling is often left unasked. Why is a picture rendered at a low resolution only to be scaled up? As a consumer, the logic feels flawed from the start. We are told that rendering at a lower resolution reduces the load on the graphics card. This is technically true. However, a way to reduce the load on a graphics card already exists: lowering the graphical settings.
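For scale, the saving from a lower internal resolution can be estimated from pixel counts alone. A rough sketch, assuming 1440p and 1080p internal resolutions for a 4K output (illustrative choices, not the presets of any specific upscaler) and assuming shading cost scales with pixel count, which is only approximately true:

```python
def pixel_count(width: int, height: int) -> int:
    """Total pixels in one frame at the given resolution."""
    return width * height


output_res = (3840, 2160)  # 4K output target

# Hypothetical internal render resolutions, chosen for illustration only.
internal_res = {
    "1440p": (2560, 1440),
    "1080p": (1920, 1080),
}

fractions = {}
for name, (w, h) in internal_res.items():
    fractions[name] = pixel_count(w, h) / pixel_count(*output_res)
    print(f"{name} -> 4K: {fractions[name]:.0%} of the output pixels are rendered")
```

The same kind of pixel saving is available by simply dropping the output resolution or the settings, which is the point of the question above.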

Image enhancement via upscaling is essentially a crutch for a narrow range of tasks. If a game requires AI to run at an acceptable frame rate on modern hardware, that result represents a failure of development, not a triumph of technology. Consumers are paying for hardware designed to compensate for inefficient software. The cycle of waste sees the consumer paying for the transistor budget of the GPU and the development time of the upscaling algorithm just to achieve a result better code could have reached.

Path Tracing Global Hardware Tax

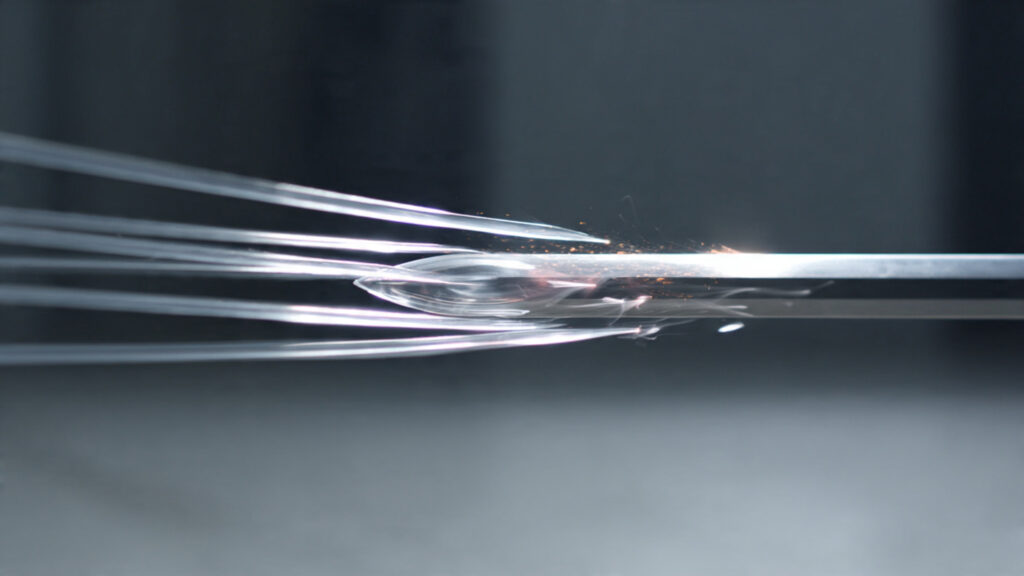

Nvidia’s current ambition centers on path tracing. This is the ultimate tool for turning even the most expensive graphics card into a struggling relic. Path tracing is the quintessence of the current marketing strategy. While technically impressive, the feature remains one with almost zero market demand. To date, full implementation exists in only a handful of titles. Most advertising for this “revolutionary” tech relies on Cyberpunk 2077, a game originally designed to run on the last generation of consoles.

The greens promote a feature that most game studios refuse to touch because the resource intensity is too high. Path tracing is a feature for the one percent of the one percent. Yet, the tech is marketed as the main driver of progress. The transistor budget is being spent on ghosts while raw performance stagnates. This bullying marketing tactic is designed to make the consumer feel that current hardware is obsolete before the box is even opened.

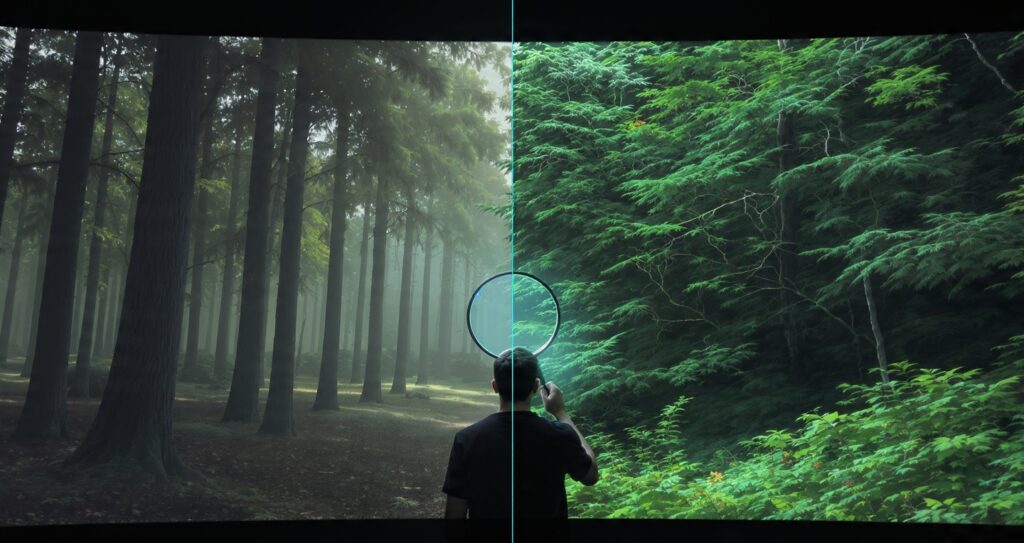

Subjective Clarity Selective Vision

The marketing narrative suggests that AI reconstruction creates a picture superior to the original. This is a beautiful fairy tale. In reality, the quality of an image is entirely subjective. A constant debate exists between those who want a sharp, high contrast image and those who prefer a softer, more natural look.

If an artist draws a tree with ten distinct twigs, a high contrast upscaler might make those twigs look like jagged wires. A softer filter might turn them into a realistic canopy. There is no objective “better” in this context. When the source material and the final resolution are the same, Nvidia’s technology often falls behind traditional rendering. Built-in upscalers only look better when a game has low quality textures that need masking. The qualitative characteristics of a picture cannot be measured by a graph, yet such measurement is exactly how these features are sold.

Ghosting Artifacts Cynical Presentations

One of the most frustrating aspects of the DLSS era is the issue of ghosting. Ghosting is the smeared trail left behind moving objects when temporal algorithms fail to keep up with fast motion. On the release of the Days Gone remaster, the issue was immediately apparent. The degradation was fundamental. Yet, many enthusiasts claim not to see the problems at all.

Selective vision is a powerful force when a person has invested a thousand dollars in hardware. Nvidia addressed the problem with incredible cynicism by demonstrating progress using a gray sword against a gray background. A scenario with zero contrast was chosen to hide the artifacts the technology creates. Why would someone accept a buggy AI filter rather than simply lowering graphics settings? The ego of the “Ultra” preset is a powerful marketing tool forcing players to accept a broken image just to keep a slider to the right.

Budget Segment Soap Betrayal

The true tragedy of DLSS is that the technology is most needed in the budget segment. For the gamer with an entry level card, every frame is a battle. However, the features work best on the most expensive hardware. The Tensor cores are a premium tax. On budget cards, upscaling often produces an image so blurry it becomes unplayable.

Upscaling for 4K has become mandatory because developers are no longer providing traditional anti-aliasing options. Techniques like MSAA and SMAA have vanished, replaced by “TAA” or upscaling solutions. You are forced into the “soap” regardless of preference. Clarity is no longer the goal. The goal is to hide engine inefficiencies behind a veil of synthetic pixels.

Benchmark Myth Transistor Budget

We are paying triple for the transistor budget of specialized RT and Tensor cores used in a tiny fraction of the games played. Nominally, there is progress. The benchmarks show the numbers going up. But in the real world, the experience has plateaued.

The last decade of graphics technology has been a masterclass in marketing over substance. We have allowed ourselves to be convinced that synthetic frames are just as good as real ones. We have accepted a world where our hardware is designed to fix broken games instead of running good ones. At Aeon Dogma, we see the grid for what it is. The technology is a crutch. It is a marketing myth. It is time to start demanding real progress instead of just better fairy tales.

TECHNICAL ADDENDUM | THE SILICON TAX AUDIT

The current 2026 hardware landscape shows a significant divergence between theoretical peak performance and actual gaming utility. This breakdown compares the raw transistor allocation of a modern “Green” flagship versus a traditional rasterization-focused architecture.

1 | Transistor Allocation Divergence

On a standard modern GPU die, approximately 25% to 30% of the total transistor budget is now dedicated specifically to RT (Ray Tracing) and Tensor (AI) cores.

- The Opportunity Cost | If these transistors were reallocated to standard CUDA or Compute Units, raw rasterization performance would increase by an estimated 35% to 40% without needing any upscaling.
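The estimate above is in line with simple proportional arithmetic. A back-of-the-envelope sketch, assuming (this is an assumption, not a measured result) that rasterization throughput scales linearly with the transistors given to shader units and that everything outside the RT and Tensor hardware counts as shader budget:

```python
def raster_gain(specialized_share: float) -> float:
    """Relative raster gain if the specialized share is handed to shader units.

    Assumes linear scaling with shader transistor count (a simplification).
    """
    remaining_share = 1.0 - specialized_share
    return specialized_share / remaining_share


# 25% and 30% are the die shares quoted in the text.
for share in (0.25, 0.30):
    print(f"{share:.0%} specialized -> about {raster_gain(share):.0%} more shader budget")
```

Under those assumptions the range works out to about 33% to 43%, which brackets the 35% to 40% figure. Real chips also spend transistors on cache, memory controllers, and display logic, so this is an idealized illustration.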

2 | The Power Efficiency Paradox

AI-driven Frame Generation requires the GPU to keep specialized hardware active even when the screen is relatively static.

- The Data | Using Frame Generation increases total board power (TBP) by roughly 15 to 25 watts to calculate “fake” frames. In contrast, lowering a single shadow setting reduces power consumption while providing a genuine latency benefit.

3 | Latency Penalty vs Synthetic Gain

While DLSS Frame Generation increases the “Visual Frame Rate” on a counter, it does not improve “Input Latency.”

- The Reality | A game running at 60 FPS (Native) feels more responsive to the human hand than a game running at 90 FPS (Synthetic) because the AI frames are inserted after the input has been processed.
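The arithmetic behind this comparison, as a sketch. It assumes 2x frame generation (one generated frame per rendered frame), so a 90 FPS counter corresponds to 45 rendered frames per second; the multiplier is an assumption, and the sketch ignores any extra buffering delay that frame interpolation may add:

```python
def input_interval_ms(displayed_fps: float, generation_factor: int = 1) -> float:
    """Milliseconds between frames that actually sample player input.

    generation_factor is the number of displayed frames per rendered frame
    (1 = no frame generation, 2 = one generated frame per rendered frame).
    """
    rendered_fps = displayed_fps / generation_factor
    return 1000.0 / rendered_fps


native_ms = input_interval_ms(60)                          # 60 rendered frames per second
synthetic_ms = input_interval_ms(90, generation_factor=2)  # 45 rendered frames per second

print(f"60 FPS native:    {native_ms:.1f} ms between input-sampling frames")
print(f"90 FPS synthetic: {synthetic_ms:.1f} ms between input-sampling frames")
```

On these assumptions the native case responds to input about every 16.7 ms and the synthetic case about every 22.2 ms, despite the higher number on the counter.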

4 | Memory Bandwidth Bottlenecks

Upscaling technology relies heavily on temporal data (information from previous frames). This puts an immense strain on VRAM bandwidth.

- The Result | Modern cards are often limited by their memory bus width rather than their core speed. We are paying for faster cores that are being “starved” of data because the upscaling algorithm is clogging the memory lanes.
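For reference, peak memory bandwidth follows directly from bus width and per-pin data rate. A minimal sketch; the bus widths and the 20 Gbps data rate are hypothetical round numbers, not the specifications of any particular card:

```python
def peak_bandwidth_gb_s(bus_width_bits: int, data_rate_gbps: float) -> float:
    """Theoretical peak bandwidth in GB/s: (bus width in bytes) x (Gbit/s per pin)."""
    return bus_width_bits / 8 * data_rate_gbps


DATA_RATE_GBPS = 20.0  # hypothetical per-pin data rate, for illustration only

for bus_width in (128, 256, 384):
    bandwidth = peak_bandwidth_gb_s(bus_width, DATA_RATE_GBPS)
    print(f"{bus_width}-bit bus: {bandwidth:.0f} GB/s")
```

At a fixed data rate, halving the bus width halves the bandwidth that cores and any temporal upscaling pass have to share.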