The history of gaming is a digital chronicle of broken barriers. Every time a new technological spike hits the mainframe, the industry reacts with a mix of neon-soaked delight and a cold, silent horror. Today, we are standing on the edge of the most significant existential glitch yet. Generative artificial intelligence has moved from a curiosity in the dark corners of the web to a full-fledged operator in the creative workflow.

The question vibrating through the circuits is simple yet heavy: are we ready to accept neural networks as the new apprentice of the artist, or should we declare an all-out war on the machine to protect the last bastions of the human soul?

The Ancient Fear of the New Architecture

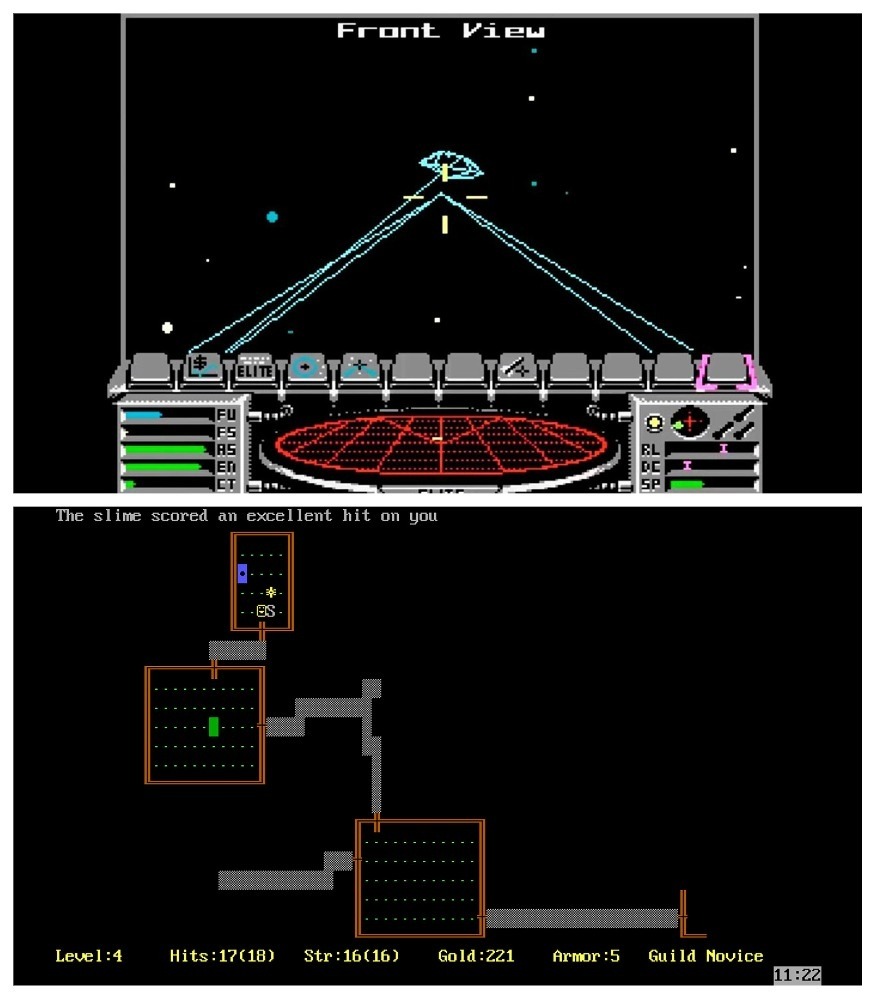

Once upon a time, the idea of a computer building a world was considered witchcraft. When Rogue (1980) and Elite (1984) brought procedural generation to a wide audience, players felt a superstitious awe. It was one of the first times the industry realized that the machine could take on the role of an architect, provided a human was holding the blueprint.

Today, the cycle repeats at a deeper level. Modern game development has become a production of staggering complexity. The cost of a single bug can reach millions, and the development cycle of a triple-A project can stretch across a decade. In this high-stakes environment, a tool that generates textures, sketches concept art, or drafts code in seconds looks like a digital savior.

However, that efficiency is exactly what triggers the anxiety. There is a fear of “impersonal” content. The gaming community has always been sensitive to the concept of the “author’s vision.” We don’t just see pixels; we see the thousands of micro-decisions made by real people. The moment a generative model enters the stream, it creates an illusion of substitution. If a machine can mimic the style of a legendary screenwriter, does the magic of the craft lose its value?

The Blurred Line of Optimization

We need to address the glitch in this skepticism. The line between human labor and algorithmic optimization was blurred years ago. Game development has always been a history of automation. Developers haven’t drawn every single pixel by hand for decades. We don’t manually calculate the physics of every leaf falling from a tree in a virtual forest. We have engines, physics middleware, and complex simulation systems for that.

The current conflict isn’t really about the tech; it’s about the label. The audience is often willing to forgive a game for being repetitive or derivative as long as it was made the “traditional” way, but they are ready to burn an innovative project at the stake if they even suspect an AI was involved. This stigmatization of progress creates a dangerous precedent where fear begins to dictate the rules of the entire grid.

The Mechanical Heart of Indie Survival

For the independent rebels, these technologies are a matter of basic survival. We have entered the era of “large-scale content,” where even small indie projects are expected to deliver thousands of lines of dialogue, high-fidelity textures, and seamless open worlds. A team of three to five people cannot compete with a corporate army of outsourcers if they are chained to 1990s production methods.

Neural networks have become the “mechanical heart” that allows these projects to live.

- Voice Synthesis: Studios are using ElevenLabs for rough voice acting, saving thousands on recording-studio sessions.

- Visual Synthesis: Tools like MeshyAI are being used to generate 3D assets, while Stable Diffusion produces textures and concept images.

When a solo developer or a tiny team sets out today to build a hit on the scale of Liar’s Bar or My Summer Car, they are forced to delegate the routine tasks to the algorithms. This allows them to focus on what actually matters: the direction, the balance, and the spark of madness that makes the game worth playing. Banning these tools for indies would be like banning Unity or Unreal. It wouldn’t protect art; it would just ensure that only the ultra-wealthy can afford to make it.

The Digital Inquisition and the Expedition 33 Incident

The case of Clair Obscur: Expedition 33 from the French studio Sandfall Interactive became a watershed moment for this discussion. In late 2025, the industry watched as the Indie Game Awards withdrew the “Game of the Year” and “Debut of the Year” awards from the project.

The reason? Several AI-generated textures were discovered in the game. They had been used as temporary placeholders during early development and were accidentally left in the release build. Even though the studio patched them out quickly, the digital inquisition had already started. This revealed a deep systemic flaw in how we currently perceive these tools.

Zero tolerance for AI has morphed from a way of protecting artists into a tool of punitive censorship. By punishing Sandfall, the industry essentially banned an effective method of finding a visual style. It’s a “cancel culture” for tools, ignoring the fact that placeholders have always been the scaffolding for the final, hand-crafted product.

We saw a similar glitch with The Alters from 11 bit studios. Players found a chatbot’s “Of course! Here is a revised version” postscript in a captain’s log. Even though AI accounted for only 0.3% of the 3.4 million words in the game, the reputational damage was immediate. According to a study by Totally Human, about 20% of games released on Steam in 2025 used AI, but almost no one admits it. We have created an environment where honesty is punished and the truth is buried under the floorboards.

The Copyright Crisis and the Search for Authenticity

The primary complaint against neural networks is both legal and ethical. These models are trained on datasets that often include the work of creators who never consented to be part of the machine’s library. This has led to a massive identity crisis. If the visual “calling card” of a game is formed by an algorithm analyzing other people’s masterpieces, can it still be called authentic?

This puts massive publishers like Ubisoft or Square Enix at risk. Why invest millions in a project if its legal ownership is a gray area? To counter this, companies are now investing in “ethically pure” models trained only on their internal archives. This is an attempt to legitimize the tech without triggering a war with the professional community.

Narrative Neural Links and the Ghost Scriptwriters

When it comes to the story, the debate gets even more personal. We are all reading more carefully now, looking for the specific “flavor” of a neural network in every noun and adjective. In massive projects where you need thousands of unique NPC greetings or item descriptions, AI is a perfect assistant. It fills the “lore” gaps that 90% of players usually skip.

However, there is a risk of “semantic noise.” AI cannot yet handle deep irony or the subtle metaphor required to capture true human pain. The danger here is “deceptive adequacy.” A screenwriter might see an AI draft that “looks normal,” and human laziness might prevent them from refining it. But a total ban on AI scripts would be a step backward into static, predictable worlds. AI can help a writer break through a creative block, offering a thousand variations of a fantasy setting to help them find the one that isn’t a cliché.

The Gilded Crutch of the AI Programmer

The situation with code is perhaps the most threatening. Neural networks have turned “copy-paste” into a high art form. Code is now often written not from a fundamental understanding of the architecture but by stitching together the algorithm’s suggestions.

- The Optimization Abyss: AI writes code for the “now,” not the “long term.” This leads to games being built on “crutches” that can collapse if a single generated fragment fails.

- The Prompt Engineer Trap: A generation of developers is growing up unable to code from a blank slate. If the grid ever goes down for a day, the industry might just stop breathing.

The Consensus Search for a Digital Compromise

Banning neural networks at a state or platform level would be a catastrophic move. It would create a legal imbalance where developers in more “flexible” countries simply out-produce and out-innovate everyone else. The solution isn’t to taboo the tool, but to regulate the process.

The Transparency Protocol:

- Mandatory Declarations: Just like the food industry lists ingredients, studios should be honest about how much AI was used. “We used AI for environmental textures to save budget for unique facial animations” is a management win, not a cheat.

- Ethical Datasets: The future belongs to closed databases and legally purchased training content. This turns the AI from a thief into a high-tech mirror reflecting the team’s own style.

- Human-in-the-Loop: Professional communities must insist on human control at every stage. The machine can suggest a thousand swords, but a human must choose the one that becomes a legend.

We once looked at neural networks as a curious fashion trend, like a new version of Photoshop. The reality is far more interesting. The silicon soul is here, and it’s not leaving. We just have to decide who is holding the leash.